About Me

Hi there 👋! I am an undergraduate student at Harbin Institute of Technology (Shenzhen), working on Trustworthy Multimodal AI and Adaptive, Data-Efficient Learning.

My previous research focused on robust and reliable adaptation of multimodal models under distribution shift, especially test-time adaptation and hallucination mitigation in vision-language systems.

I am currently diving into world models and embodied AI, aiming to help build more intelligent and capable robotic systems.

News

- 2026.05: 🎉🎉 Two papers were accepted by ICML 2026. Congrats! 🥳

- 2026.02: 🎉🎉 One paper, “Do All Individual Layers Help?”, was accepted by CVPR 2026 Findings.

- 2026.02: 🎉🎉 One paper, “Test-Time Distillation for Continual Model Adaptation”, was accepted by CVPR 2026 Findings.

- 2025.11: 🎉🎉 Recognized as one of the ‘Top Ten Outstanding College Students’ at Harbin Institute of Technology (Shenzhen).

- 2024.10: 🎉🎉 Awarded the Chinese National Scholarship.

Publications

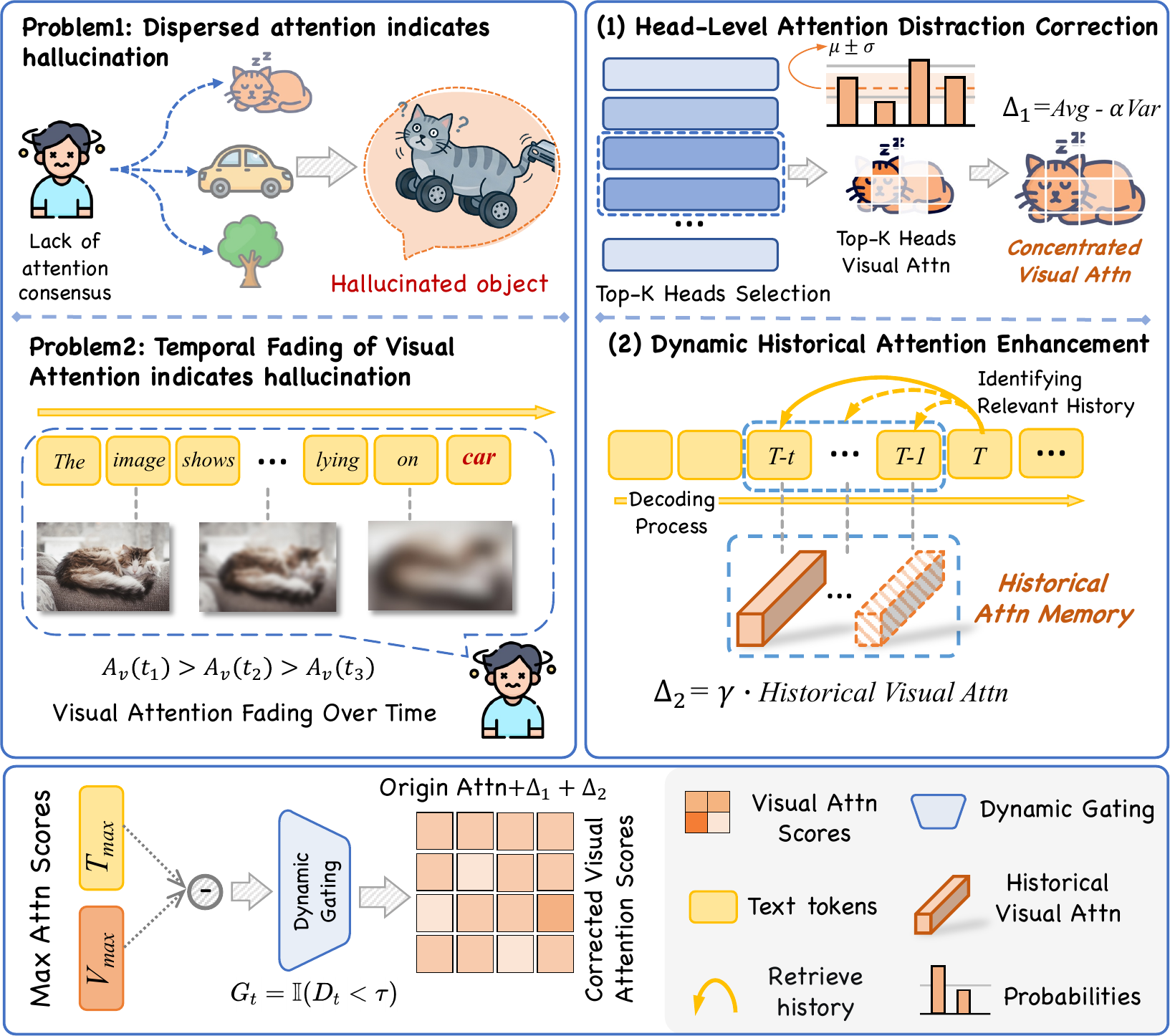

Correcting Visual Blur Induced by Attention Distraction to Reduce Hallucinations: Algorithm and Theory

Quanjiang Li†, Zhiming Liu†, Wei Luo, Tingjin Luo, Chenping Hou

- We identify the link between human-like attention distraction and object hallucinations in multimodal models, and propose AFIP, a training-free method that corrects spatial and temporal attention dispersion to strengthen visual grounding.

- † indicates equal contribution (co-first authors).

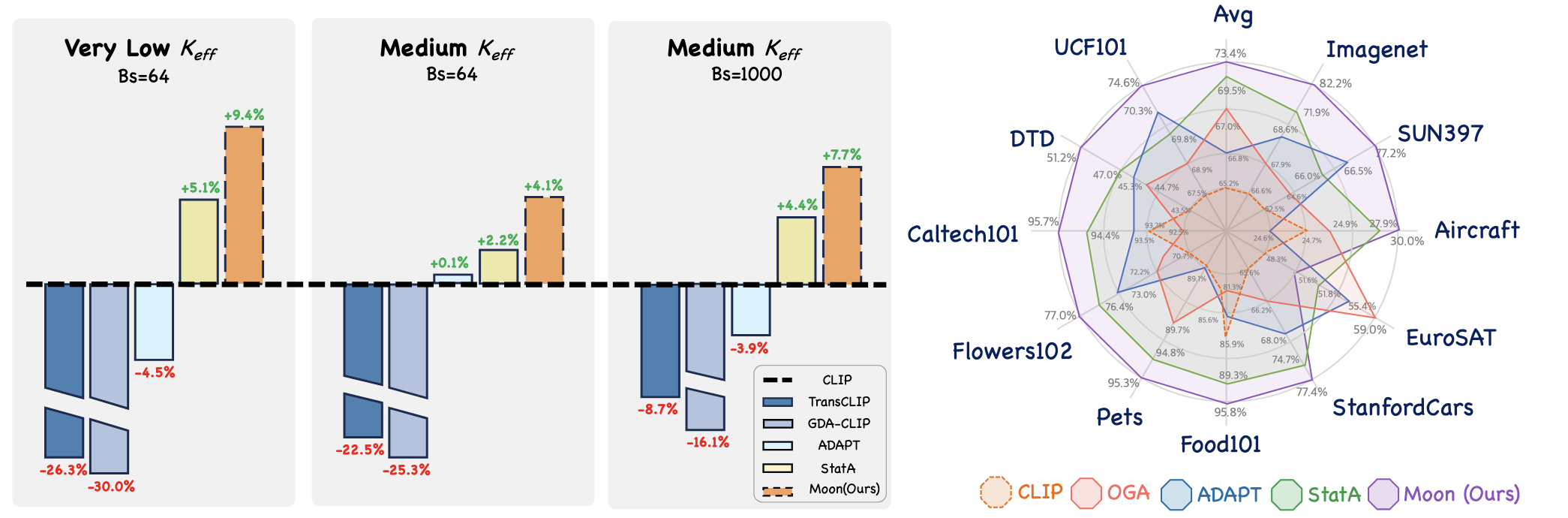

Von Mises-Fisher Mixture Model with Dynamic Shrinkage for Realistic Test-Time Transduction

Jiazhen Huang, Zhiming Liu, Changhu Wang, Wei Ju, Ziyue Qiao, Xiao Luo

- We identify the brittleness of transductive methods under imbalanced distributions and propose MOON, a training-free, model-agnostic framework that dynamically adjusts shrinkage strength to mitigate negative transfer and improve VLM performance.

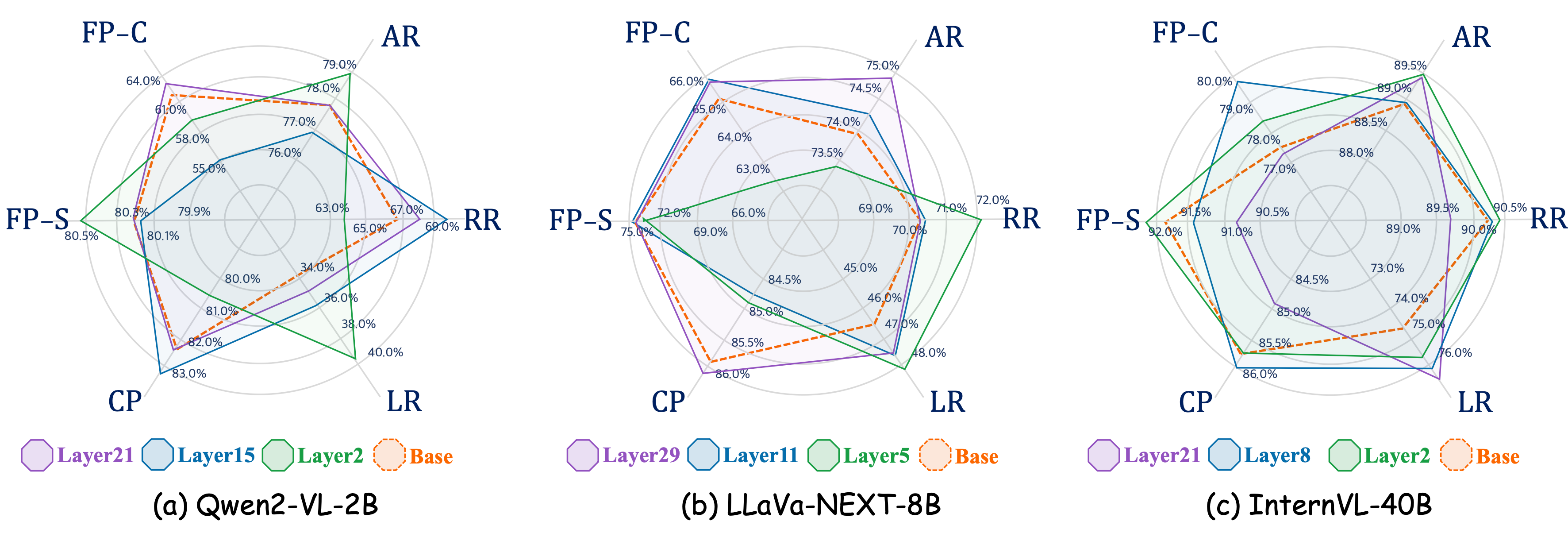

Do All Individual Layers Help?

Zhiming Liu, Yujie Wei, Lei Feng, Xiu Su, Xiaobo Xia, Weili Guan, Zeke Xie, Shuo Yang

- We identify task-interfering layers in vision-language models and propose a lightweight test-time intervention strategy that improves downstream few-shot reasoning without retraining.

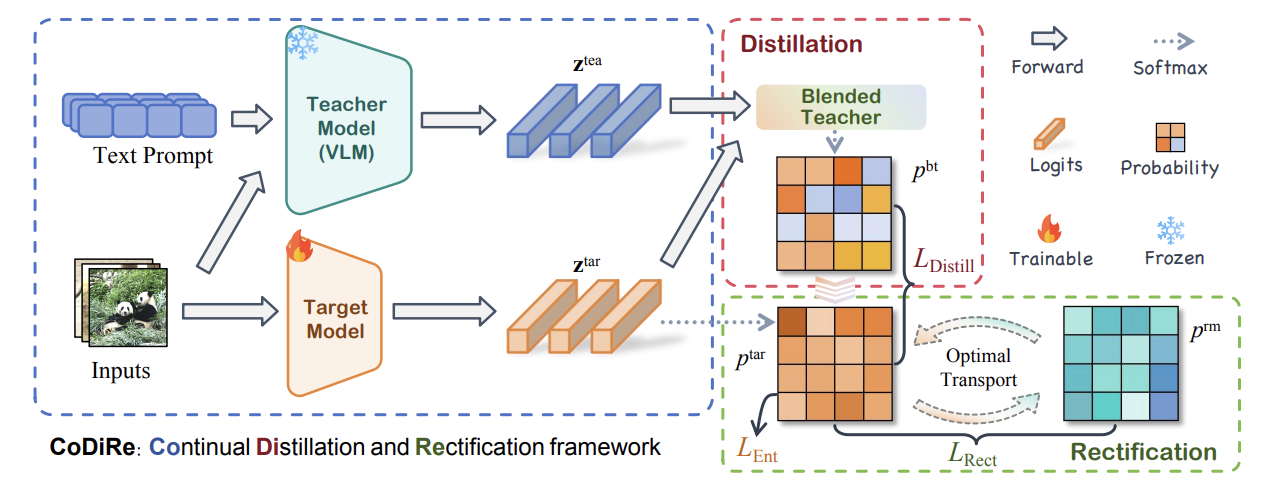

Test-Time Distillation for Continual Model Adaptation

Xiao Chen†, Jiazhen Huang†, Zhiming Liu, Qinting Jiang, Fanding Huang, Jingyan Jiang, Zhi Wang

- We propose a collaborative test-time distillation framework for continual model adaptation that improves robustness and generalization under realistic distribution shifts.

- † indicates equal contribution (co-first authors).

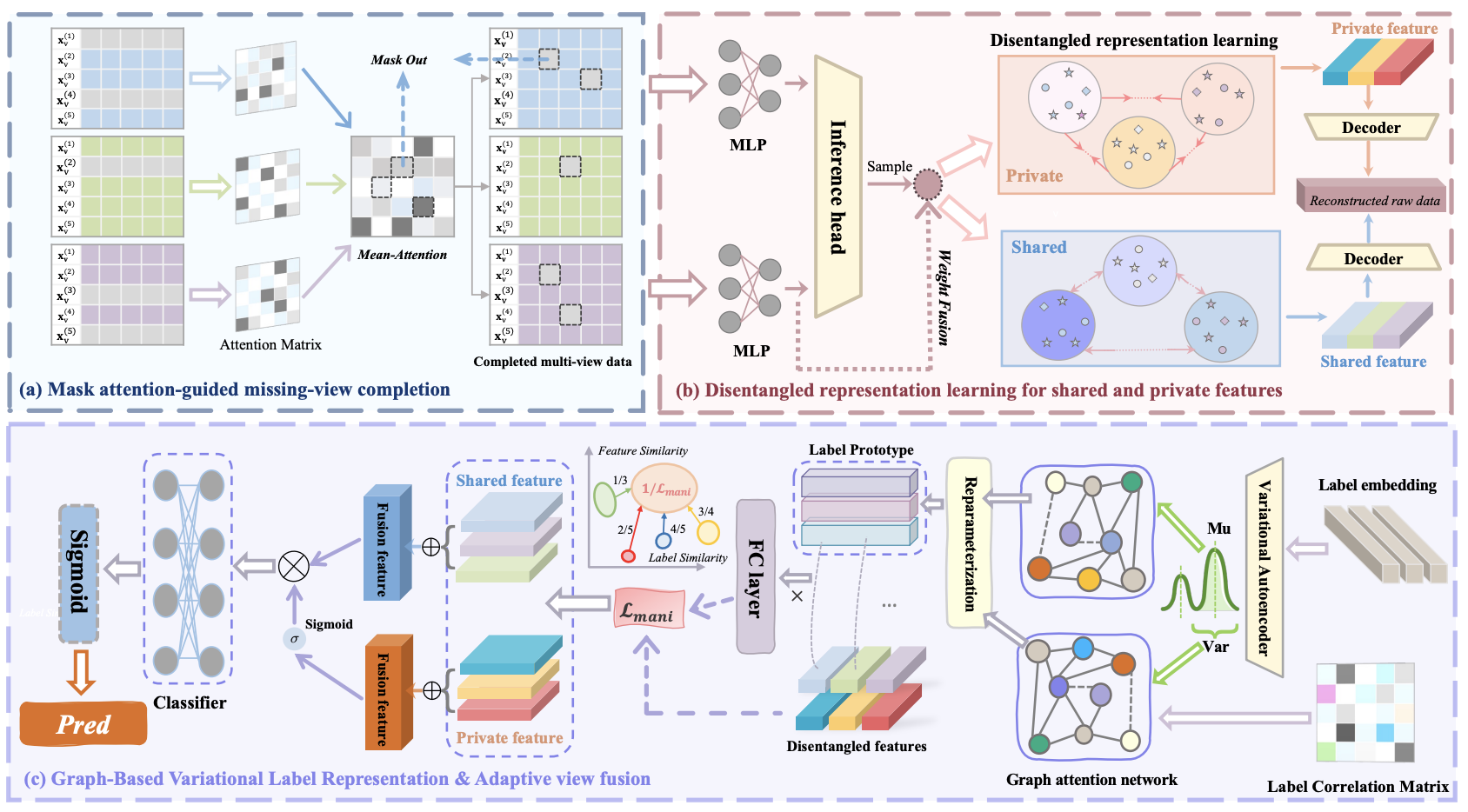

Adaptive Disentangled Representation Learning for Incomplete Multi-View Multi-Label Classification

Quanjiang Li†, Zhiming Liu†, Tianxiang Xu†, Tingjin Luo, Chenping Hou

- We propose ADRL, a framework that jointly addresses structural distortion and semantic ambiguity in incomplete multi-view settings by integrating label-guided feature disentanglement and category-aware embedding interaction.

- † indicates equal contribution (co-first authors).

Honors and Awards

- 2024: Finalist Award in the Mathematical Contest in Modeling (MCM)

- 2024: Chinese National Scholarship

- 2024: First Prize Scholarship at Harbin Institute of Technology (Shenzhen)

- 2025: National Second Prize, Global Campus Artificial Intelligence Algorithm Elite Competition 2025

- 2025: Top Ten Outstanding College Students of Harbin Institute of Technology (Shenzhen)

- 2025: First Prize Scholarship at Harbin Institute of Technology (Shenzhen)

Research Project

Education

Experience